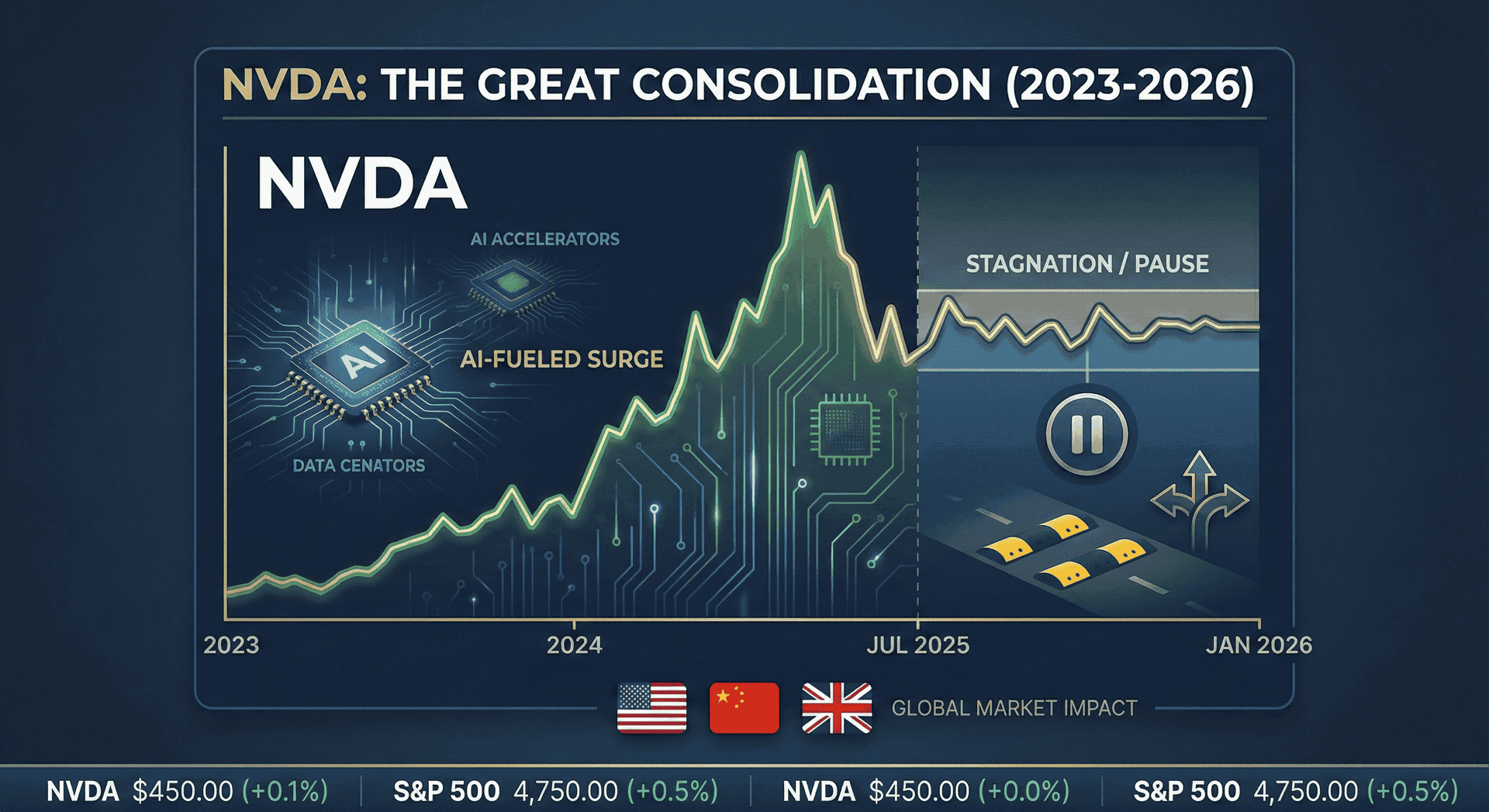

Nvidia Stock Stuck Since July - Why Wall Street's 5 Trillion Dollar AI Giant Has Suddenly Hit the Brakes in 2026

After soaring 1000 percent in five years, Nvidia stock has flatlined since summer 2025. Discover why the world's largest company faces critical questions about valuation.

The 187 Billion Dollar Question: Why Has Nvidia's Miracle Run Suddenly Stalled?

In the sprawling landscape of United States stock markets, few stories have captured investor imagination quite like Nvidia's meteoric ascent. From a 187 dollar share price in early 2023 to briefly touching 212 dollars in late 2025, the company transformed from a gaming chip maker into the undisputed emperor of artificial intelligence infrastructure. Nvidia became the world's most valuable company, eclipsing even Apple and Microsoft with a market capitalization that briefly surpassed 5 trillion dollars. Yet as January 2026 begins, investors staring at their portfolios are confronting an uncomfortable reality. Nvidia stock sits at roughly 189 dollars per share, almost exactly where it traded in July 2025. Six months of sideways movement for a company that had previously doubled annually feels less like a pause and more like a verdict. The question reverberating through trading floors from New York to London to Hong Kong is deceptively simple but impossibly complex: What happens next?

The Summer That Changed Everything

Nvidia shares have remained relatively flat since the summer of 2025, with investors increasingly worried about whether spending on artificial intelligence chips has reached a peak. This stagnation represents a dramatic shift for a stock that had become synonymous with unstoppable momentum. Throughout 2023 and 2024, Nvidia stock more than doubled each year, driven by insatiable demand for its graphics processing units that power everything from ChatGPT to autonomous vehicles. The company reported quarterly revenue growth rates exceeding 200 percent, profit margins that made even the most successful tech giants envious, and order backlogs stretching years into the future. By any conventional metric, Nvidia was not just winning but dominating its market with a ferocity rarely seen in technology history.

Then came the summer of 2025, and something fundamental changed. The stock has gained 34.84 percent over the past year, with a 52-week trading range between 86.62 dollars and 212.19 dollars. While a return of nearly 35 percent would delight most investors, for Nvidia shareholders accustomed to triple-digit gains, it feels like stagnation. More concerning than the percentage is the pattern. After reaching its 52-week high above 212 dollars, the stock retreated and has oscillated in a narrow band ever since, unable to break decisively higher despite continued strong earnings reports and optimistic guidance from management. The enthusiasm that once sent the stock soaring on any positive news has been replaced by a cautious skepticism, a collective holding of breath as the market tries to determine whether this is a healthy consolidation before the next leg higher or the beginning of something more ominous.
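
To put the trading range in perspective, the short sketch below locates the current price inside the 52-week band. It uses only the figures quoted in this article (a price of roughly 189 dollars, a low of 86.62 and a high of 212.19); the variable names are illustrative.

```python
# Figures quoted in the article; 189 is the approximate current share price.
price = 189.0
low_52w, high_52w = 86.62, 212.19

# Fraction of the way from the 52-week low to the 52-week high (0 = low, 1 = high).
range_position = (price - low_52w) / (high_52w - low_52w)

# Percentage decline from the 52-week high.
drawdown_from_high = (1 - price / high_52w) * 100

print(f"Position in 52-week range: {range_position:.0%}")
print(f"Drawdown from 52-week high: {drawdown_from_high:.1f}%")
```

On these inputs the stock sits about four-fifths of the way up its range and roughly 11 percent below its high, which is consistent with a consolidation near the top rather than a collapse.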

The Valuation Paradox: Too Expensive or Just Expensive Enough?

At the heart of Nvidia's stagnation lies a mathematical puzzle that has divided Wall Street's most sophisticated analysts. Nvidia currently trades at a price-to-earnings ratio of approximately 46 to 48, which sits well above the market average. To understand why this matters, consider what a P/E ratio represents. It tells investors how much they are paying for each dollar of earnings the company generates. A P/E of 46 means investors are paying 46 dollars for every dollar of annual profit Nvidia produces. The S&P 500 index as a whole trades at a P/E around 25, meaning Nvidia commands nearly double the market's average valuation multiple.

Bulls argue this premium is entirely justified. Nvidia is projected to grow earnings by 57 percent in fiscal 2026, with analysts forecasting earnings of 4.69 dollars per share this year and 7.46 dollars per share in fiscal 2027. If a company can genuinely grow profits at 57 percent annually while maintaining industry-leading margins, a P/E of 46 is not expensive but reasonable, perhaps even cheap. The skeptics counter with a different calculus. Nvidia is now one of the largest companies in the world by revenue with over 50 billion dollars in quarterly sales, and it cannot maintain 62 percent year-over-year revenue growth forever at this scale. There simply is not enough global capital to keep making massive upfront investments in Nvidia chips indefinitely. Eventually, supply will meet demand, growth will slow, and when it does, a P/E ratio nearly double the market average will look dangerously exposed.
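
The bull and bear arithmetic can be made concrete with a small calculation. The sketch below divides the roughly 189 dollar share price by the analyst earnings forecasts quoted above to get forward P/E ratios; the function name `forward_pe` is illustrative, and the inputs are the article's figures rather than live market data.

```python
def forward_pe(price: float, eps: float) -> float:
    """Share price divided by forecast earnings per share."""
    return price / eps

price = 189.0        # approximate share price cited in the article
eps_fy2026 = 4.69    # analyst EPS forecast for fiscal 2026
eps_fy2027 = 7.46    # analyst EPS forecast for fiscal 2027

pe_fy2026 = forward_pe(price, eps_fy2026)
pe_fy2027 = forward_pe(price, eps_fy2027)

# EPS growth implied by moving from the FY2026 forecast to the FY2027 forecast.
implied_growth = eps_fy2027 / eps_fy2026 - 1

# Premium of a trailing P/E of 46 over an S&P 500 multiple of about 25.
premium_vs_market = 46 / 25

print(f"P/E on FY2026 EPS: {pe_fy2026:.1f}")
print(f"P/E on FY2027 EPS: {pe_fy2027:.1f}")
print(f"Implied FY2026 to FY2027 EPS growth: {implied_growth:.1%}")
print(f"Multiple relative to the S&P 500: {premium_vs_market:.2f}x")
```

If the fiscal 2027 forecast holds, the multiple on those earnings falls to about 25, roughly the market's level. That is the core of the bull case, and it depends entirely on the forecast being met.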

The valuation debate is further complicated by Nvidia's positioning within the broader market context. Nvidia's P/E ratio is currently below its three-year average of 66.93 and its five-year average of 65.59, which suggests the stock has actually become cheaper relative to its own historical norms. Yet those historical comparisons may be misleading. During much of that period, Nvidia was transitioning from a gaming company to an AI infrastructure provider, justifying a higher multiple through a genuine transformation narrative. In 2026, that transformation is complete. Nvidia is no longer a company with potential; it is a company delivering at scale. The question is whether the current valuation prices in perfection, leaving little room for error.

China's H200 Gambit: Geopolitics Meets Silicon

While valuation concerns simmer beneath the surface, a more immediate drama is unfolding across the Pacific Ocean that could dramatically reshape Nvidia's 2026 trajectory. Nvidia has informed Chinese clients it aims to start shipping H200 AI chips to China before the Lunar New Year holiday in mid-February, with initial shipments expected to total between 5,000 and 10,000 chip modules, equivalent to approximately 40,000 to 80,000 H200 chips. This development represents one of the most significant shifts in United States technology policy toward China in years, and for Nvidia, it could mean the difference between meeting and missing revenue expectations in 2026.

The H200 chip sits at the center of a geopolitical tug-of-war that has reshaped the global semiconductor industry. Under the Biden administration, the United States effectively banned the export of advanced AI chips to China, citing national security concerns about military applications. Nvidia responded by creating the H20, a deliberately downgraded chip designed to comply with export restrictions while still serving the Chinese market. But the H20 proved to be a poor substitute, forcing Chinese tech giants like Alibaba, ByteDance, and Tencent to make do with chips offering a fraction of the performance they needed. Now, under the Trump administration, a policy reversal has opened the door for H200 exports, though with a significant catch. Washington will allow H200 sales to China provided a 25 percent fee is paid to the United States government.

The strategic implications are enormous. For Chinese technology companies, the H200 represents approximately six times the performance of the H20 chips they have been forced to use. This performance gulf is not incremental; it is transformational, potentially enabling Chinese firms to build AI models and applications that would have been impossible with restricted hardware. Chinese tech giants Alibaba and ByteDance have reportedly placed orders for more than 2 million H200 units for delivery throughout 2026. If these orders materialize, they would represent billions of dollars in revenue for Nvidia, providing a crucial cushion as the company navigates supply constraints and competitive pressures elsewhere.

Yet nothing about the China situation is certain. Beijing has not yet approved any H200 purchases, and Chinese officials are reportedly weighing whether to allow shipments at all, with one proposal requiring each H200 purchase to be bundled with a specified ratio of domestically produced chips. China finds itself caught between competing imperatives. Its technology sector desperately needs access to cutting-edge AI hardware to remain competitive globally, yet allowing large-scale imports of American chips undermines the massive investments Beijing has made in developing domestic semiconductor capabilities. Companies like Huawei have poured billions into creating alternatives like the Ascend 910C chip, which attempts to match the H200's capabilities. Approving large H200 imports might provide short-term relief but could slow the progress toward the self-sufficiency that Chinese leadership considers essential for long-term national security.

Blackwell's Impossible Math: Record Demand Meets Physical Reality

If the China situation represents geopolitical complexity, Nvidia's Blackwell chip architecture embodies a different kind of challenge: the limits of manufacturing at the bleeding edge of physics. Nvidia CEO Jensen Huang has confirmed that the company's Blackwell B200 and GB200 chips are completely sold out through mid-2026, with industry reports indicating a staggering backlog of 3.6 million units from the world's largest cloud providers. This is not the kind of backlog that accumulates from weak sales or missed targets. This is demand so intense that it has outstripped even Nvidia's most aggressive production ramps, creating a situation where being the dominant player in the market paradoxically becomes a constraint rather than an advantage.

The Blackwell architecture represents a generational leap in AI computing capability. Nvidia claims Blackwell achieves the highest performance and best overall efficiency in independent benchmarks while delivering 10 times the throughput per megawatt compared to the previous Hopper generation. For hyperscale cloud providers like Microsoft, Amazon, Google, and Meta, this means they can train larger AI models faster while consuming less electricity and occupying less data center space. In an industry where competitive advantage is measured in training time and inference speed, access to Blackwell chips has become a strategic imperative. Companies without Blackwell allocations find themselves competing with outdated hardware, a potentially fatal disadvantage in the cutthroat world of frontier AI development.

The sell-out status has created a bifurcated industry. Startups that secured early Blackwell allocations have seen their valuations skyrocket, while those stuck on older H100 clusters find it increasingly difficult to compete on inference speed and cost. Oracle briefly became one of the best-performing tech stocks of 2025 by specializing in rapid-deployment Blackwell clusters for mid-sized AI labs. Meanwhile, companies like Meta have been forced to delay major AI initiatives, waiting months for chip deliveries that would have arrived in weeks during previous product cycles. The shortage has effectively transformed high-end computing capacity into a strategic resource comparable to oil reserves in the 20th century, with nations and corporations alike scrambling to secure their share before rivals.

Yet selling out your inventory is not always the blessing it appears to be for a publicly traded company. When every chip you can manufacture is spoken for months in advance, you lose the ability to exceed expectations through higher volumes. Nvidia's Q4 2025 guidance, while strong, came in roughly where analysts expected rather than dramatically above, a pattern that has become increasingly common. By extending H200 production through 2026, Nvidia is attempting to bridge the scarcity gap created by Blackwell's massive backlog and de-risk its revenue outlook. The company is essentially running two parallel production lines, churning out last-generation H200 chips alongside cutting-edge Blackwell units, a strategy that maximizes revenue but also reveals the limits of manufacturing capacity at TSMC's fabrication facilities in Taiwan.

The Memory Crisis: When Success Breeds Bottlenecks

Nvidia's challenges extend beyond chip design and geopolitics into the mundane but critical world of computer memory. The sustained demand for both H200 and Blackwell chips has triggered a historic supply crunch for High Bandwidth Memory, with SK Hynix and Micron Technology signaling that their 2025 HBM3e capacity is fully committed. High Bandwidth Memory is the specialized, extremely expensive memory that sits directly on AI accelerator chips, allowing them to process massive datasets at speeds that would be impossible with traditional memory configurations. Without sufficient HBM supply, even the most advanced chip design becomes irrelevant.

The HBM shortage has ripple effects throughout Nvidia's product lineup. Reports indicate Nvidia may reduce production of its GeForce RTX 50 series gaming graphics cards by 30 to 40 percent in early 2026 due to GDDR7 memory supply constraints. While gaming GPUs use different memory than data center chips, both compete for manufacturing capacity at Samsung, SK Hynix, and Micron. When memory suppliers allocate more production lines to HBM3e for AI chips, less capacity remains for GDDR7 used in gaming products. Nvidia appears to be making the calculation that a dollar of data center revenue is worth more than a dollar of gaming revenue, both in absolute terms and in market perception. Sacrificing some gaming card supply to ensure maximum AI chip production makes strategic sense but also highlights the zero-sum tradeoffs the company now faces.

SK Hynix and Samsung Electronics are reportedly planning a 20 percent price hike for HBM3e memory in early 2026, capitalizing on Nvidia's need to equip both H200 and Blackwell systems with massive amounts of memory. This price increase will pressure Nvidia's legendary profit margins. Throughout 2024 and 2025, the company maintained gross margins above 70 percent, a level almost unheard of in hardware manufacturing. As component costs rise and competition intensifies, those margins face inevitable compression. The question is whether Nvidia can offset rising input costs through higher chip prices to customers or increased sales volumes, or whether investors should prepare for margin erosion that would make the current valuation look even more stretched.
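
How much a 20 percent HBM price hike would dent margins depends on how large HBM is within Nvidia's cost of goods sold, a figure this article does not give. The sketch below is therefore a sensitivity check under stated assumptions: the 70 percent starting gross margin and the 20 percent hike come from the text, while the HBM cost shares of 20, 40 and 60 percent are purely hypothetical, and chip prices to customers are assumed unchanged.

```python
def margin_after_hike(gross_margin: float,
                      hbm_share_of_cogs: float,
                      hbm_price_increase: float) -> float:
    """Gross margin after an HBM price rise, holding selling prices fixed.

    hbm_share_of_cogs is a HYPOTHETICAL input: the fraction of cost of
    goods sold attributable to HBM. The article does not state it.
    """
    cogs = 1.0 - gross_margin  # cost of goods sold per dollar of revenue
    new_cogs = cogs * (1.0 + hbm_share_of_cogs * hbm_price_increase)
    return 1.0 - new_cogs

# Hypothetical HBM cost shares; 0.70 margin and 0.20 hike are from the article.
results = {share: margin_after_hike(0.70, share, 0.20) for share in (0.2, 0.4, 0.6)}

for share, margin in results.items():
    print(f"HBM at {share:.0%} of cost: gross margin {margin:.1%}")
```

Under these assumptions the margin slips by one to four percentage points. That is meaningful for a stock priced for perfection, but it is compression rather than collapse.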

The Competition That Everyone Sees Coming

No discussion of Nvidia's 2026 prospects would be complete without addressing the elephant in every boardroom: competition. Nvidia's profit margins could fall once the company loses its pricing power, especially if competition keeps rising from Alphabet's TPU chip and Amazon's Trainium chip. For years, Nvidia has enjoyed a near-monopoly in AI chips, with estimated market share exceeding 90 percent in data center GPUs. But that dominance has painted a target on the company's back, attracting billions in competitor investment from players with extremely deep pockets.

Google's Tensor Processing Units have evolved from internal tools to competitive alternatives that the company now offers to cloud customers. Amazon's Trainium chips are similarly positioned as cost-effective options for certain AI workloads. More concerning is the trend of hyperscalers designing custom silicon optimized for their specific needs. AMD's data center revenue grew 22 percent year over year to 4.3 billion dollars, while Broadcom's AI semiconductor revenue increased 74 percent to 6.5 billion dollars. While these competitors remain much smaller than Nvidia, their growth trajectories and the resources backing them suggest the competitive moat may be narrowing.

The competitive threat extends to unexpected quarters. In China, Huawei's Ascend 910C AI chip is reportedly very similar to Nvidia's H100, though no local chipmaker has yet developed a processor matching the H200's capabilities. If United States export restrictions tighten again, or if Chinese domestic chip development accelerates faster than expected, Nvidia could find itself locked out of the world's second-largest economy just as homegrown alternatives mature. The United Kingdom has also been investing heavily in domestic AI chip capabilities, though at a much smaller scale. The broader pattern is clear. Nvidia's success has convinced virtually every major technology power that semiconductor self-sufficiency is a national priority, a trend that can only intensify competition over time.

What the Smart Money Is Saying

Wall Street remains deeply divided on Nvidia's prospects for 2026, with analyst opinions spanning an unusually wide range for such a heavily covered stock. Analyst consensus estimates place Nvidia's average price target at 253.02 dollars, with a range from 140 dollars on the low end to 352 dollars on the high end. That 212-dollar spread reflects genuine uncertainty about fundamental questions: Will AI spending continue accelerating, plateau, or contract? Will Blackwell supply constraints ease or persist? Will China become a major growth driver or remain a minor factor?
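
The width of that disagreement is easier to see as implied returns. The sketch below compares each price target quoted above with the roughly 189 dollar share price cited earlier in this article; the dictionary keys are illustrative labels.

```python
current_price = 189.0  # approximate share price cited in the article

# Analyst price targets quoted in the article.
targets = {"low": 140.0, "average": 253.02, "high": 352.0}

# Implied percentage move from the current price to each target.
implied_move = {name: (target / current_price - 1) * 100
                for name, target in targets.items()}

spread = targets["high"] - targets["low"]

for name, move in implied_move.items():
    print(f"{name:>7} target {targets[name]:7.2f}: {move:+.1f}%")
print(f"High-low spread: {spread:.0f} dollars")
```

On these figures the consensus target implies a gain of about a third, while the extremes run from a loss of roughly a quarter to a gain of more than 85 percent.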

The optimistic case rests on straightforward math. Goldman Sachs estimates that hyperscalers including Microsoft, Alphabet, Amazon, and Meta will spend roughly 500 billion dollars on AI capital expenditures in 2026, while McKinsey projects AI infrastructure will represent a 7 trillion dollar opportunity over the next five years. If those projections are even remotely accurate, Nvidia's current revenue levels represent a small fraction of total addressable market. Nvidia CEO Jensen Huang noted the company has a total order backlog of around 500 billion dollars for Blackwell, Rubin, and accompanying networking products, with an estimated 300 billion dollars expected to be recognized as revenue during 2026. These numbers suggest not just continued growth but accelerating growth that could push the stock significantly higher.

The bearish case is equally compelling. There are signs of cracks showing up in spending plans for major customers like OpenAI, Microsoft, and Oracle. If the return on investment from AI infrastructure fails to materialize as quickly as hoped, or if a technology plateau leaves companies with excess capacity, the current spending boom could reverse with shocking speed. Technology history offers numerous examples of boom-bust cycles in infrastructure spending, from the fiber optic overbuilding of the late 1990s to the server farm expansion and subsequent contraction of the early 2000s. Some analysts argue that if Nvidia's stock were to double in 2026, the company would approach a 10 trillion dollar market capitalization, a level that would require nearly flawless execution and continued exponential growth.

The January 6 Mystery: CES 2026 and What It Reveals

As investors debate Nvidia's trajectory, an important data point looms on the calendar. CES 2026, the massive consumer electronics show in Las Vegas, begins on January 6 and could provide crucial signals about technology industry health and AI adoption momentum. Nvidia typically uses CES to showcase new products and partnerships, and 2026 will be no exception. The company is expected to detail its Rubin architecture, the successor to Blackwell that is scheduled to enter mass production in late 2026. Rubin is expected to move to TSMC's 3-nanometer process and utilize next-generation HBM4 memory, with projections suggesting it could offer another 2.5 times leap in performance, potentially reaching 50 petaflops per GPU.

Beyond Nvidia's own announcements, CES will reveal how quickly AI is transitioning from infrastructure investment to consumer application. If major consumer brands showcase AI-powered products built on Nvidia chips, it validates the bull case that AI infrastructure spending will generate returns justifying continued investment. If the show feels incremental, with more promises than delivered products, it could fuel concerns that the AI boom is more hype than substance. The automotive sector will be particularly important to watch. Nvidia has formed automotive partnerships with Toyota and other major manufacturers, and demonstrable progress in autonomous driving or AI-enhanced vehicle systems could open a massive new revenue stream beyond data centers.

The Memory Manufacturers Are Getting Rich Too

An often-overlooked dimension of Nvidia's stagnation is what it reveals about where value is accruing in the AI supply chain. Server manufacturers like Dell Technologies have raised their fiscal 2026 AI server revenue forecast to 20 billion dollars, citing a massive 14.4 billion dollar backlog that includes both H200 and Blackwell configurations. Supermicro, the liquid-cooled server specialist, has similarly seen its stock surge as it positions H200 racks as alternatives for customers unable to secure Blackwell allocations. Even TSMC, which manufactures Nvidia's chips, has expanded its advanced packaging capacity by 10 times since late 2023 to meet demand.

This supply chain prosperity matters for Nvidia's stock because it suggests the company may not capture as much of the AI infrastructure spending boom as investors once assumed. If memory manufacturers, server makers, and foundries are all raising prices and expanding margins, they are collectively taking a larger slice of the same revenue pie. Nvidia's dominance in chip design is unquestionable, but in a constrained supply environment, the suppliers of critical components gain leverage they would not otherwise possess. This is basic economics, but it runs counter to the narrative that positioned Nvidia as the singular beneficiary of AI investment.

What Makes This Different From Every Other Tech Boom

It is impossible to evaluate Nvidia's 2026 prospects without considering whether the AI boom itself is sustainable or destined to follow the pattern of previous technology manias. The parallels to the dot-com bubble are obvious enough that even bulls acknowledge them. Massive infrastructure investment based on projected future applications. Valuations that price in decades of growth. A land grab mentality where companies feel compelled to invest or risk being left behind. Skeptics point to these patterns and see an obvious bubble waiting to pop, with Nvidia positioned as the ultimate bubble stock.

Yet there are crucial differences that distinguish 2026 from 1999. The companies investing in AI infrastructure are not speculative startups burning venture capital but some of the most profitable corporations in history. Microsoft, Amazon, Google, and Meta generate hundreds of billions in revenue and tens of billions in free cash flow. Their AI investments, while massive, represent rational allocations of genuine profits rather than borrowed money chasing vague dreams. Perhaps more importantly, AI applications are already generating revenue. ChatGPT has hundreds of millions of users, millions of whom pay for subscriptions. GitHub Copilot has transformed how millions of developers write code. AI-powered drug discovery has identified promising compounds in a fraction of the time traditional methods require. These are not theoretical applications waiting for the technology to mature; they are real products generating real returns today.

The counterargument is that current AI applications, while impressive, do not yet justify the scale of infrastructure investment. If the next five years fail to produce truly transformative applications beyond incremental improvements to chatbots and code assistants, the companies that have spent hundreds of billions on AI chips will face difficult questions from shareholders about return on capital. Nvidia cannot grow revenue at 62 percent year over year forever with over 50 billion dollars in quarterly revenue. At some point, possibly soon, the rate of infrastructure investment must slow. When it does, Nvidia's growth rate will compress dramatically, and the market's willingness to pay a premium valuation will evaporate.
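
A simple compounding exercise shows why that growth rate cannot persist. The sketch below annualizes the 50 billion dollar quarterly revenue figure by multiplying by four, which is a simplification, and then applies a constant 62 percent annual growth rate, which is a hypothetical assumption rather than a forecast. It compares the result with the roughly 500 billion dollars of hyperscaler AI capital expenditure that Goldman Sachs estimates for 2026.

```python
quarterly_revenue_bn = 50.0   # from the article, in billions of dollars
growth_rate = 0.62            # year-over-year growth cited in the article
capex_benchmark_bn = 500.0    # Goldman Sachs 2026 hyperscaler AI capex estimate

# Simplification: annualized run rate is four times the quarterly figure.
run_rate_bn = quarterly_revenue_bn * 4

# Hypothetical projection: constant 62 percent growth for five years.
projection = [run_rate_bn * (1 + growth_rate) ** year for year in range(6)]

# First year in which projected revenue exceeds the capex benchmark.
years_to_exceed = next(year for year, revenue in enumerate(projection)
                       if revenue > capex_benchmark_bn)

for year, revenue in enumerate(projection):
    print(f"Year {year}: {revenue:,.0f} billion dollars")
print(f"Revenue passes the capex benchmark in year {years_to_exceed}")
```

At that pace Nvidia's revenue alone would exceed the entire estimated 2026 hyperscaler AI budget within two years and reach trillions within five, which is why even bulls expect the growth rate to fall.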

The United Kingdom Wildcard

While most attention focuses on the United States and China, the United Kingdom has emerged as an unexpected factor in Nvidia's global strategy. Nvidia announced a 2 billion pound investment in the United Kingdom AI startup ecosystem, positioning itself as a partner in Britain's effort to become a leading AI power. This investment serves multiple purposes for Nvidia. It diversifies the company's geographic presence at a time when United States-China tensions create policy uncertainty. It provides access to world-class AI research coming from universities like Oxford and Cambridge. It potentially insulates Nvidia from future European Union regulations that could limit its operations.

The UK investment also reflects a broader pattern of nations treating AI infrastructure as strategic assets. Just as countries once competed to secure oil supplies or manufacturing capacity, they now compete for access to advanced computing resources. The era of Sovereign AI has seen nations like Japan and the UK scrambling to secure their own Blackwell allocations to avoid dependency on United States cloud providers. For Nvidia, this nationalization of AI infrastructure creates both opportunities and risks. On one hand, it expands potential customer base beyond the handful of American hyperscalers. On the other hand, it complicates supply allocation and introduces geopolitical considerations into what were once purely commercial decisions.

The Path Forward: Three Scenarios for 2026

As we move deeper into 2026, three distinct scenarios emerge for Nvidia's trajectory, each with different implications for investors trying to navigate the current uncertainty. The bull scenario assumes continued exponential growth in AI infrastructure spending, successful Blackwell production ramp, normalized relations with China leading to substantial H200 revenue, and the emergence of new AI applications that validate current investment levels. In this scenario, Nvidia stock could reach approximately 221 dollars per share by year-end, representing roughly 17 percent growth from the current price of about 189 dollars, with some analysts projecting even higher targets approaching 300 dollars if all factors align perfectly. This outcome requires nearly everything to go right, but it remains plausible given the tremendous momentum behind AI adoption.

The base scenario envisions moderate growth with periodic volatility. AI infrastructure spending continues but at a decelerating rate as supply catches up with demand. Blackwell ships in volume but faces margin pressure from component costs and increased competition. China relations remain uncertain, with H200 revenue coming in below initial projections due to Beijing's ambivalence. In this scenario, Nvidia stock trades in a range between 180 dollars and 220 dollars throughout 2026, delivering respectable but unspectacular returns that roughly match broader market performance. This is probably the most likely outcome, requiring neither everything to go right nor everything to go wrong, but rather a muddling through that reflects the genuine uncertainty about AI's medium-term trajectory.

The bear scenario sees one or more of several risks materializing. AI infrastructure spending slows sharply as companies realize returns on investment are lower than projected. A major Blackwell customer encounters financial difficulties or cancels orders. China tensions escalate, leading to a complete ban on chip exports that eliminates a potential growth driver. New competition from Broadcom, AMD, or hyperscaler internal chips proves more effective than expected, eroding Nvidia's market share. Memory shortages persist longer than anticipated, constraining revenue growth. In this scenario, Nvidia stock could decline to the 140 to 160 dollar range, representing a drop of roughly 15 to 26 percent from the current price of about 189 dollars. While painful, this would not constitute a crash so much as a repricing that brings valuation more in line with a mature, slower-growth company.
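
The three scenarios can be placed side by side by converting each price range into a return against the roughly 189 dollar share price quoted earlier in this article. The sketch below does only that arithmetic; the scenario labels and the helper `pct_change` are illustrative, and the returns are computed from the stated price levels, so they may differ from rounded percentages quoted in prose.

```python
current_price = 189.0  # approximate share price cited in the article

# (low, high) year-end price levels for each scenario, from the article.
scenarios = {
    "bull": (221.0, 221.0),
    "base": (180.0, 220.0),
    "bear": (140.0, 160.0),
}

def pct_change(target: float, base: float) -> float:
    """Percentage change from base to target."""
    return (target / base - 1) * 100

returns = {name: (pct_change(low, current_price), pct_change(high, current_price))
           for name, (low, high) in scenarios.items()}

for name, (low_ret, high_ret) in returns.items():
    print(f"{name}: {low_ret:+.1f}% to {high_ret:+.1f}%")
```

Measured this way, the bull target and the top of the base range sit within a percentage point of each other, while the bear range implies a loss of about 15 to 26 percent.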

What Investors Should Watch in the Months Ahead

Rather than attempting to predict which scenario will unfold, investors would be better served monitoring specific indicators that will clarify Nvidia's direction before it is reflected in the stock price. The most important is quarterly guidance. If Nvidia's Q1 2026 revenue guidance, reported in late February, shows acceleration rather than deceleration in growth rates, it would strongly suggest the company is navigating supply constraints successfully. Conversely, if guidance disappoints or management commentary becomes more cautious, it could signal real demand weakness emerging.

China developments will be equally critical. The entire H200 export plan is contingent on government approval from Beijing, with significant uncertainty remaining about whether purchases will be permitted. If China approves large-scale imports in Q1 2026, it could add billions in near-term revenue and validate the bull case. If Beijing blocks purchases or imposes onerous conditions like mandatory bundling with domestic chips, it removes a major potential growth driver. Customer commentary from Microsoft, Amazon, Google, and Meta during their earnings calls will provide insight into whether AI infrastructure spending remains on track or is beginning to slow. Any indication of a digestion period or disappointing return on investment would be negative for Nvidia.

Memory pricing trends deserve close attention. If HBM prices stabilize or decline from their current elevated levels, it would ease pressure on Nvidia's margins and potentially allow for price cuts that could stimulate demand. If memory prices continue rising through 2026, margin compression becomes increasingly likely. Finally, the success or failure of AI applications in gaining mainstream adoption will ultimately determine the sustainability of infrastructure investment. If breakthrough applications emerge that justify the trillions being invested, Nvidia's platform becomes more entrenched. If AI remains primarily the domain of early adopters and technology enthusiasts, the addressable market may be smaller than current bulls assume.

The stagnation of Nvidia stock since July 2025 is not a repudiation of the AI revolution but a reflection of the market's growing sophistication in evaluating it. After three years of buying any AI exposure at any price, investors are now demanding answers to harder questions. Can growth rates be sustained? Are valuations reasonable? Is the competitive moat durable? These are precisely the questions that should be asked about any investment, no matter how compelling the underlying story. Nvidia's six-month pause is not weakness but maturation, the transition from momentum stock to blue chip that must prove itself quarter after quarter. For investors, this creates a more challenging but ultimately healthier evaluation framework. The stock may no longer double annually, but sustainable 20 to 30 percent returns backed by genuine earnings growth and technological leadership remain entirely achievable. In 2026, Nvidia faces its first real test not as a growth story but as a growth company, and how it navigates this transition will determine not just the stock price but the company's place in technology history.

As January 2026 unfolds, Nvidia stands at an inflection point that transcends typical corporate challenges. The company has successfully transformed from gaming periphery to AI central nervous system, achieving a scale and profitability that places it among the most successful technology companies ever created. Yet scale brings scrutiny, and success invites competition. The sideways movement in Nvidia stock since July is not failure but recognition that the easy part is over. Proving you can dominate a nascent market is one thing. Proving you can maintain that dominance as the market matures, competitors mobilize, and governments intervene is something altogether different.

The good news for shareholders is that Nvidia enters this next phase from a position of tremendous strength. The company generates 50 billion dollars in quarterly revenue with 70 percent-plus gross margins, metrics that would be remarkable for a company one-tenth its size. Its technology leadership remains undisputed, with Blackwell representing the state of the art in AI acceleration and Rubin promising another leap forward. Its customer relationships with every major technology platform ensure privileged insight into market direction. Its supply chain advantages, hard-won through years of investment and partnership, create barriers to entry that go far beyond chip design. These are real, durable advantages that provide a foundation for continued success even as growth rates normalize.

The challenge is that these advantages must be continuously defended and renewed in an industry where technological leadership can evaporate with surprising speed. The semiconductor industry is littered with companies that dominated for a time before being displaced by faster, cheaper, better alternatives. Intel, once considered as invincible as Nvidia appears today, spent the last decade watching its market leadership erode to TSMC and AMD. Qualcomm dominated mobile chips until rivals such as MediaTek and Apple's in-house designs took share. Even within AI specifically, the landscape has shifted dramatically in just three years, and another three years could bring changes we cannot yet imagine. Nvidia's task in 2026 and beyond is to avoid the complacency that has undone so many technology leaders before it.

For investors trying to decide whether to buy, hold, or sell Nvidia stock at current levels, the answer depends less on predictions about the next quarter than on beliefs about the next decade. If you believe AI will continue transforming industry after industry, that data center capital expenditures will grow to the trillions of dollars annually that management projects, and that Nvidia's platform advantages will compound rather than erode, then a stock trading at 46 times earnings with 57 percent earnings growth is not expensive. If you believe AI hype has exceeded reality, that much of current infrastructure investment will prove wasteful, and that competition will erode both market share and margins, then even current valuations look vulnerable to significant correction. Neither view is demonstrably wrong; both are backed by legitimate analysis, and the truth likely lies somewhere between the extremes.

What seems certain is that Nvidia in 2026 will be a different investment than Nvidia in 2023 or 2024. The days of easy triple-digit returns are almost certainly behind us. What lies ahead is something simultaneously more mundane and more interesting. Can the world's most valuable company by market capitalization prove it deserves that valuation through consistent execution across multiple product cycles? Can it navigate geopolitics without sacrificing growth? Can it maintain technological leadership while dozens of well-funded competitors attack its position? These questions will be answered not through a single quarter's results but through years of competitive battle. The stock price will follow, but with more volatility and less certainty than the one-way ride investors enjoyed from 2023 to mid-2025. For those willing to accept that volatility in exchange for exposure to the company best positioned to benefit from AI's continued expansion, Nvidia remains compelling. For those who prefer steadier returns with less drama, the sideways movement since July suggests the market is still deciding what comes next, and patience might yet be rewarded with a better entry point.